Is Model Governance Slowing AI Adoption for Anti-Financial Crime?

Hawk’s latest report with Chartis found that 91% of financial institutions now encourage the use of AI for financial crime and compliance (FCC). While that momentum is pushing more models into production, it’s also exposing a gap most FCC teams weren’t prepared for: governing these models.

In this article, we unpack the report's findings and what the data tells us about the challenges of developing and governing AI models.

→ Download the Banking Edition here

→ Download the Payment & FinTech Edition here

What the Data Tells Us About Model Governance Challenges in Financial Crime & Compliance

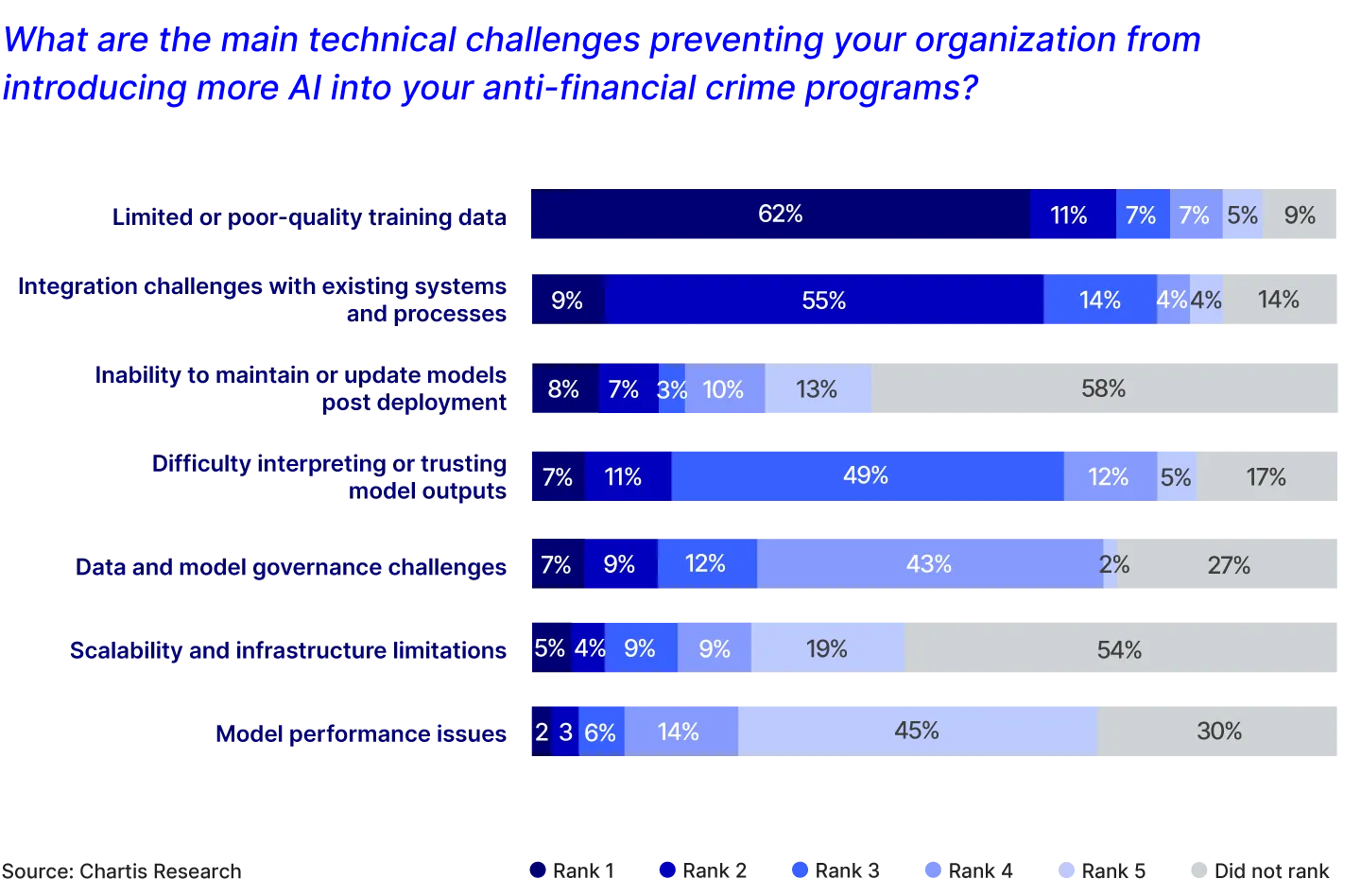

We asked 125 compliance and risk leaders at banks around the world about the technical challenges holding them back from bringing more AI into their anti-financial crime programs.

Over half of those technical challenges were related to model governance. This shows that for many FCC teams, building a model is only the start. The real challenge lies in validating, operationalizing, governing, and optimizing models over time, and many teams don't have the resources to do it.

1. Limited or poor-quality training data (91% placed in top 5). This is the most cited challenge, and it sits at the foundation of everything. A model is only as good as the data it learns from. Without clean data and clear data lineage, models learn from noise as much as signal, generating false positives that could have been avoided. Regulators also expect FIs to demonstrate that the data underpinning their models is fit for purpose, making data quality a governance requirement as much as a technical one.

2. Integration challenges with existing systems and processes (86% placed in top 5). Even a well-built model fails if it can't connect to the systems that feed it data or act on its outputs. Integration gaps slow deployment, create manual workarounds, and introduce points of failure that are difficult to document and defend. For governance purposes, poor integration also makes it harder to maintain consistent model behavior.

3. Difficulty interpreting or trusting model outputs (83% placed in top 5). If FCC teams can't understand why a model flagged something, they can't act on it confidently, and they certainly can't explain it or defend the resulting decisions to an auditor or examiner. Explainability is a governance requirement. Models that operate as black boxes undermine the human oversight that effective financial crime controls depend on.

4. Data and model governance challenges (73% placed in top 5). Nearly half of respondents flag governance itself as a challenge. While 91% of banks say they've implemented AI to some degree, 73% of those same banks place model governance challenges in their top 5 concerns. This suggests one of two things: either governance remains a source of uncertainty or pain even for institutions that already use AI, or, for the subset of respondents not yet using AI, it may be one of the main barriers holding them back.

5. Model performance issues (70% placed in top 5). Model performance isn't static. Financial crime typologies evolve, customer behavior shifts, and a model that performed well at deployment can degrade without warning. Identifying and addressing that degradation is a core part of model governance, and without visibility into how performance changes over time, firms risk running controls that are no longer effective.

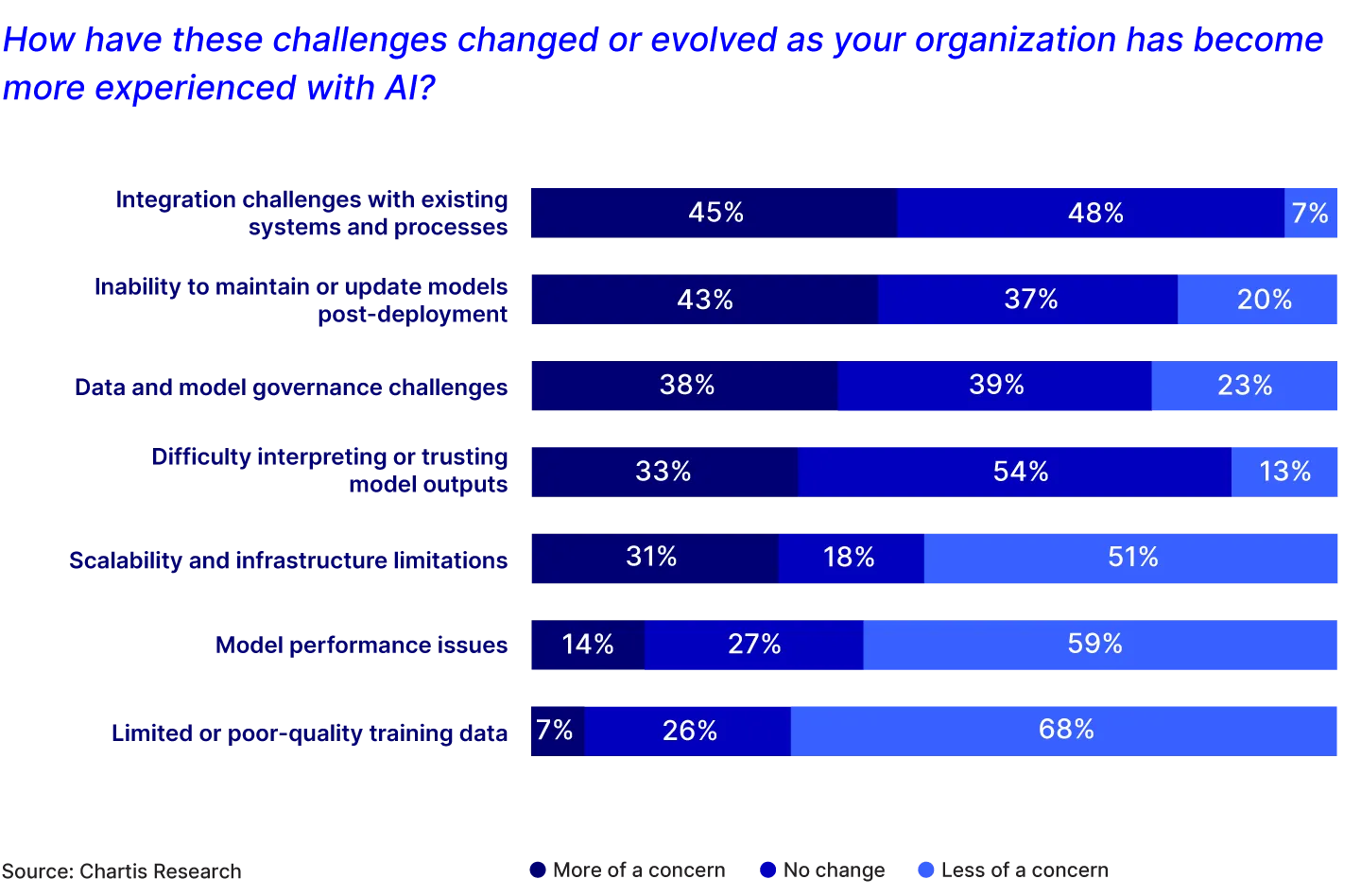

How AI Model Governance Challenges Evolve After Deployment

As FIs move from pilot to production with AI and the number of models deployed grows, the nature of the challenges shifts. Pre-deployment concerns about data quality and integration don't disappear. But when we asked respondents which challenges had increased since deploying AI, a new set of governance pressures came to the top.

43% report increased concern about the inability to update models. Once a model is live, keeping it current requires coordination with data science teams, and those teams are often overstretched. The result? Model updates can be slow, infrequent, and reactive. Models can then miss new threats as behaviors change, and can even become less effective at detecting the threats they were originally built to catch.

38% report increased concern about data and model governance challenges. Sustaining governance across a growing model inventory is much harder than governing a single model. FCC teams need consistent documentation, version control, monitoring, and audit trails across every model. But most teams lack the right tooling to maintain governance at scale.

33% report increased concern about interpreting or trusting model outputs. This challenge doesn't go away after deployment. As models are updated or retrained, FCC teams need ongoing visibility into how and why decisions are being made, not just at launch but throughout the model's lifecycle.

What If Model Governance Were Simpler?

Hawk’s research shows that investment in AI for AML and fraud prevention is growing. But that investment only delivers results if the models are accurate, explainable, and maintained over time. Poor governance doesn't just create regulatory risk; it weakens confidence in AI itself, slowing adoption and limiting the return on what firms have already invested.

Some FCC teams take a step back first: they cleanse their data, set clear model objectives, start with the right use case, stay involved in development, and invest in governance before deployment. Those teams will be better positioned to respond faster to new threats, confidently explain their AI models, and build the institutional trust that makes further investment worthwhile. The FIs that get the most value from AI won't be those that deploy the fastest. They'll be the ones with strong model governance.

What Good Model Governance Actually Looks Like

1) Documenting every stage of model development. Model documentation is often treated as something that needs to be produced only when it's requested. However, capturing every decision, lesson learned, and change throughout the development process makes it easy to generate that documentation on demand. Defensible model documentation should include the model's purpose, the data it consumes and where that data comes from, how performance is measured, and how the model changes over time, from versioning to the business decisions that affect its design.
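To make that list concrete, here is a minimal Python sketch of a documentation record that captures these elements as the model changes, rather than reconstructing them when someone asks. All class, field, and method names are hypothetical illustrations; this is not Analytics Studio's format or any regulator's template.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import date


@dataclass
class ModelVersion:
    """One entry in the model's change history (hypothetical structure)."""
    version: str
    released: date
    change_summary: str      # what changed in this version
    business_rationale: str  # the business decision behind the change


@dataclass
class ModelDocumentation:
    """Hypothetical record of what defensible model documentation should cover."""
    model_name: str
    purpose: str
    data_sources: dict[str, str]    # dataset name -> where that data comes from
    performance_metrics: list[str]  # how performance is measured
    history: list[ModelVersion] = field(default_factory=list)

    def record_change(self, version: ModelVersion) -> None:
        """Log each change when it happens, not after the fact."""
        self.history.append(version)

    def to_report(self) -> str:
        """Render a plain-text summary on demand from what was captured."""
        lines = [
            f"Model: {self.model_name}",
            f"Purpose: {self.purpose}",
            "Data sources:",
            *[f"  - {name}: {origin}" for name, origin in self.data_sources.items()],
            "Performance metrics: " + ", ".join(self.performance_metrics),
            "Version history:",
            *[
                f"  - {v.version} ({v.released.isoformat()}): {v.change_summary}; "
                f"rationale: {v.business_rationale}"
                for v in self.history
            ],
        ]
        return "\n".join(lines)
```

The design choice is simply that every change goes through `record_change` as it happens, so the report is a by-product of the workflow rather than a separate writing exercise.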

Hawk has built a solution to help financial crime and compliance teams with AI model lifecycle management. Analytics Studio generates documentation automatically as part of the platform’s workflow. Standardized templates ensure every model follows the same governance framework, alert samples enable effective SME model review, and comparison views show how new models measure up against leading models. The result is regulator-ready, downloadable model documentation on demand.

2) Building trust in model outputs. Effective model governance requires that teams using AI for anti-financial crime can understand and stand behind the decisions their models make. Without that ability, FCC teams are approving outputs they don't fully understand, and the institution itself is being asked to accept model decisions on trust. The industry's focus on explainable AI reflects this regulatory expectation.

Analytics Studio supports this with human-readable explanations, feature definitions, and decision evidence available by default. FCC teams can see not just what a model decided, but why, giving them the confidence to act on outputs and the evidence to defend those decisions when it matters.

3) Maintaining model effectiveness over time. An important aspect of model governance is ensuring models are effective after deployment. Financial crime patterns evolve, and a model trained on historical data will gradually lose relevance if not retrained soon enough. Leading FIs are building regular retraining cycles into their governance frameworks.
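As an illustration of what a regular retraining cycle might check, the sketch below flags a model for retraining when either a scheduled interval has passed or a monitored performance metric has fallen too far below its baseline at deployment. It assumes a single metric where higher is better (for example, the share of alerts that investigators confirm as worth escalating). The function name, thresholds, and metric are hypothetical and illustrative, not recommended values.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class RetrainingPolicy:
    """Hypothetical policy; both thresholds are illustrative only."""
    max_days_between_retrains: int = 180  # scheduled retraining cycle
    max_relative_drop: float = 0.10       # largest tolerated drop vs. baseline


def needs_retraining(
    baseline_metric: float,
    recent_metric: float,
    last_trained: date,
    today: date,
    policy: RetrainingPolicy = RetrainingPolicy(),
) -> list[str]:
    """Return the reasons a model should be retrained (empty list if none)."""
    reasons = []
    days = (today - last_trained).days
    if days > policy.max_days_between_retrains:
        reasons.append(f"scheduled cycle exceeded ({days} days since last training)")
    if baseline_metric > 0:
        # Relative drop of the monitored metric from its deployment baseline.
        drop = (baseline_metric - recent_metric) / baseline_metric
        if drop > policy.max_relative_drop:
            reasons.append(f"performance dropped {drop:.0%} from baseline")
    return reasons
```

For example, `needs_retraining(0.40, 0.30, date(2024, 1, 1), date(2024, 3, 1))` returns a single reason: the metric fell 25% from its baseline, even though only 60 days had passed since the last training. Combining a time-based trigger with a performance-based one means degradation is caught between scheduled cycles.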

Hawk’s Analytics Studio enables compliance teams to retrain and update models independently, without relying on data scientists. Embedded financial crime expertise guides the process, so teams can act quickly when performance starts to degrade.

Analytics Studio: The Answer to AI Governance Challenges

Hawk built Analytics Studio to solve the model governance challenges that our banking and payment customers were facing. Across institutions of every size, the same pattern emerged: FCC teams were investing in AI but lacking the tools to govern it. For some, that meant wasted development cycles and models that never made it to production. For others, it meant models already live with no simple way to produce documentation when they needed it.

Analytics Studio puts model governance directly in the hands of FCC teams. Teams can retrain and update models independently, generate documentation and audit trails automatically, and get human-readable explanations with every model decision.

If you’re navigating model governance, learn how Analytics Studio works here.

-----------

Download your copy of the report and read all the findings:

→ Download the Banking Edition here

→ Download the Payment & FinTech Edition here